Privacy beyond the person: contextual privacy in computer vision

In our last blog post, “Opt-In Vision”, we talked about how images can be transformed to retain utility for a specific task while minimizing sensitive information. The focus was primarily on protecting the person in the image: how can we hide sensitive attributes while still extracting useful data, like pedestrian counts?

However, when deploying computer vision in industrial settings, the privacy risks often extend far beyond just the individuals captured in the footage. For example, cameras on a factory floor might capture what specific tools are being used, the layout of the workspace, or proprietary manufacturing workflows. This information can be highly sensitive from a business perspective.

In this post, we explore the next step: contextual privacy. How do we protect not just the people in the image, but the broader background context that might leak sensitive corporate Intellectual Property (IP)?

The Setting: Multi-Camera Ergonomic Monitoring

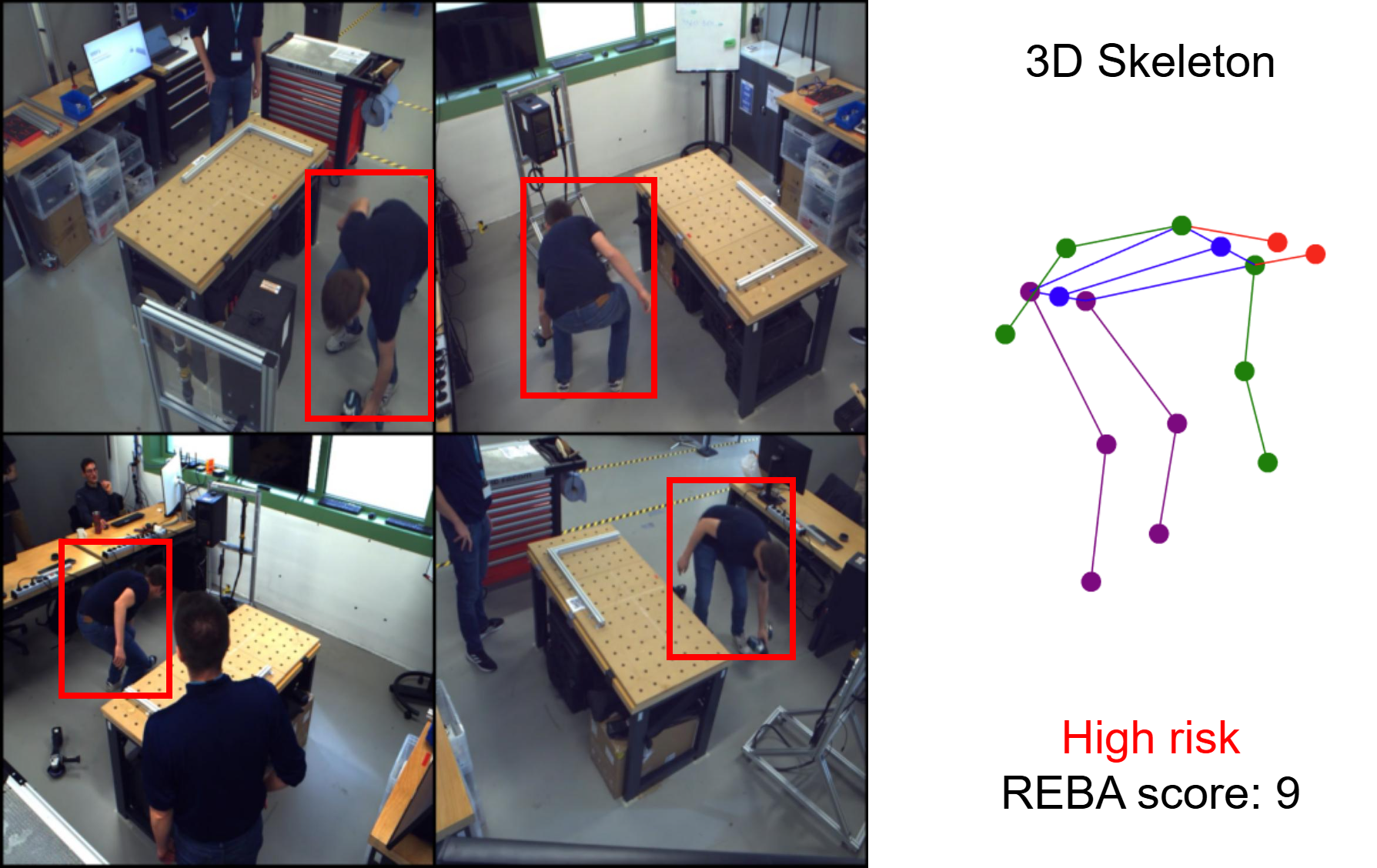

To test this, we conducted a case study on multi-camera ergonomic monitoring in an industrial setting, collaborating with Flanders Make in the context of the Flanders AI Research Program.

The goal was to monitor workers’ postures to improve workplace ergonomics, while ensuring the system respects both personal and contextual privacy. In our setup, workers are filmed using 4 synchronized cameras. We perform keypoint (joint) estimation on all camera views, and then fuse those keypoints to get a 3D representation of the worker’s posture. From this 3D skeleton, we calculate a standard ergonomic risk score (REBA).
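To make the fusion step more concrete, here is a minimal sketch of how one joint seen by several cameras could be lifted to 3D. It assumes each camera contributes a ray (its centre plus a direction through the detected 2D keypoint) and finds the point closest to all rays in a least-squares sense. The function names, camera positions, and the ray-based formulation are illustrative assumptions, not necessarily the fusion method used in the paper.

```python
import math

def det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))

def solve_3x3(A, b):
    """Solve A x = b for a 3x3 system with Cramer's rule."""
    d = det3(A)
    if abs(d) < 1e-12:
        raise ValueError("rays are (nearly) parallel; cannot triangulate")
    solution = []
    for k in range(3):
        # Replace column k of A with b.
        Ak = [[b[i] if j == k else A[i][j] for j in range(3)] for i in range(3)]
        solution.append(det3(Ak) / d)
    return solution

def triangulate_rays(centers, directions):
    """Point minimising the summed squared distance to all rays."""
    A = [[0.0] * 3 for _ in range(3)]
    b = [0.0] * 3
    for c, d in zip(centers, directions):
        norm = math.sqrt(sum(v * v for v in d))
        d = [v / norm for v in d]
        for i in range(3):
            for j in range(3):
                # (I - d d^T) projects onto the plane orthogonal to the ray.
                m = (1.0 if i == j else 0.0) - d[i] * d[j]
                A[i][j] += m
                b[i] += m * c[j]
    return solve_3x3(A, b)

# Hypothetical setup: four cameras in the corners of a 4 m x 4 m cell.
joint = (1.0, 2.0, 1.5)
centers = [(0, 0, 3), (4, 0, 3), (4, 4, 3), (0, 4, 3)]
directions = [tuple(j - c for j, c in zip(joint, cam)) for cam in centers]
estimate = triangulate_rays(centers, directions)
```

With noise-free rays the estimate coincides with the true joint position. With real detections the rays no longer intersect exactly, and the least-squares solution averages out part of the per-camera error.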

The challenge? We want to calculate these ergonomic scores in the cloud without ever exposing the raw video of the factory floor, the workers’ identities, or the specific tools they are using.

The Upgrade: Learning to Hide the Context

In our previous post, we introduced an adversarial framework: an obfuscator that learns to strip away irrelevant information, and a deobfuscator that tries to reconstruct the original image from the obfuscated one. The obfuscator learns to minimize the data so that only the information needed for keypoint estimation is preserved.
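The tug-of-war between the two networks can be sketched as a pair of opposing objectives. The snippet below is a toy illustration on plain lists: the mean squared error terms and the `privacy_weight` parameter are assumptions chosen for readability, and the losses in the paper may be defined differently.

```python
def mse(a, b):
    """Mean squared error between two equal-length sequences."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)

def deobfuscator_loss(original, reconstruction):
    # The adversary wants its reconstruction to match the original image.
    return mse(original, reconstruction)

def obfuscator_loss(true_keypoints, predicted_keypoints,
                    original, reconstruction, privacy_weight=0.5):
    # Keep keypoints accurate (low task error) while making the
    # adversary's reconstruction poor (high reconstruction error).
    task_error = mse(true_keypoints, predicted_keypoints)
    recon_error = mse(original, reconstruction)
    return task_error - privacy_weight * recon_error

# Toy values: a 4-pixel "image" and one 2D keypoint.
original = [0.2, 0.8, 0.5, 0.1]
keypoints = [10.0, 20.0]
predicted = [10.5, 19.5]

# Case 1: the adversary reconstructs the image perfectly (privacy leak).
leaky = obfuscator_loss(keypoints, predicted, original, [0.2, 0.8, 0.5, 0.1])
# Case 2: the adversary's reconstruction is far off (privacy holds).
private = obfuscator_loss(keypoints, predicted, original, [0.9, 0.1, 0.0, 0.7])
```

For the same keypoint accuracy, the obfuscator's loss is lower when the adversary fails, which is the pressure that pushes it to discard everything the pose estimator does not need.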

Overview of the privacy-preserving pipeline. Raw video is transformed at the edge, meaning only safe, obfuscated data is transmitted for 3D posture analysis.

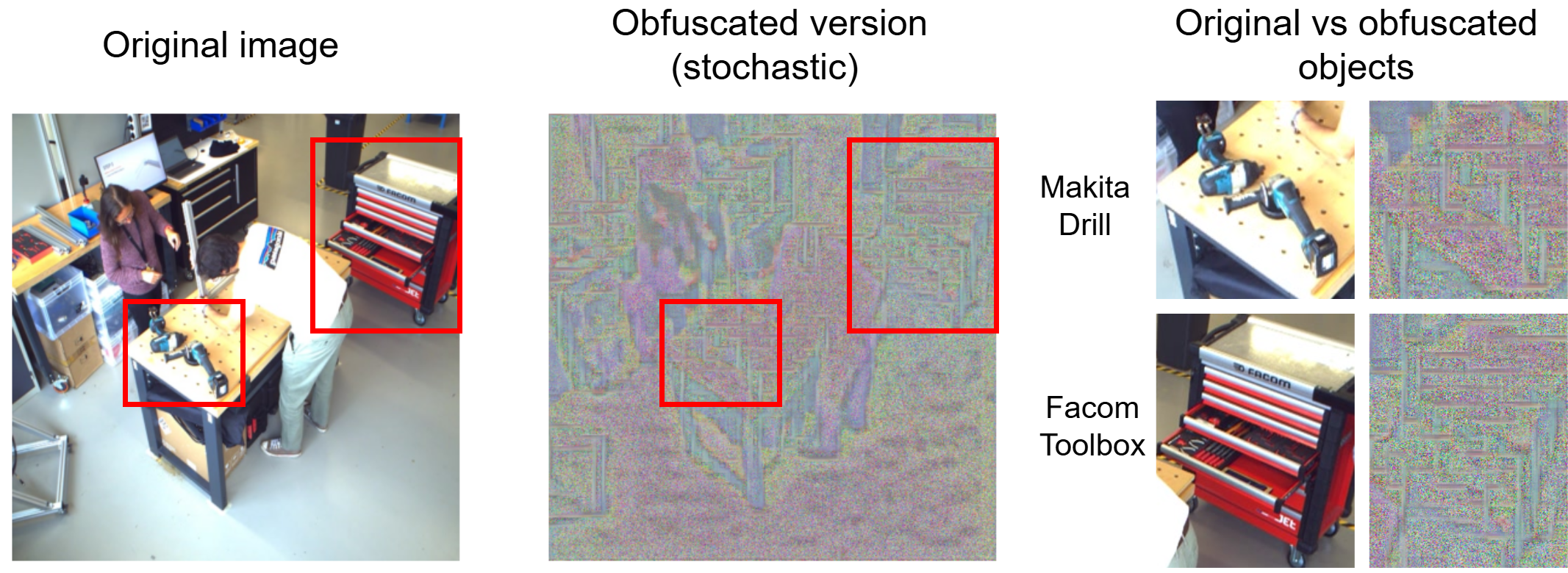

To make this application aware of contextual privacy, we deploy a similar setup directly at the edge (on the camera itself). But this time, we add a stochastic (probabilistic) noise component to the obfuscator, which increases the amount of noise injected into the image.

Instead of just applying a uniform filter, our new network learns to explicitly inject targeted, randomized visual noise into the image. Because the network’s goal is to maintain accuracy only for the human pose estimation task, it learns that the background is completely useless. As a result, it preferentially alters the background, and the sensitive tools within it, with heavy distortion, making it extra difficult for anyone to reconstruct the original scene.
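The idea of targeted, randomized noise can be illustrated with a per-pixel noise scale. In the sketch below the scale map is set by hand, whereas a trained obfuscator would predict it; the function name, the Gaussian noise model, and the toy image are all assumptions made for illustration.

```python
import random

def inject_noise(image, noise_scale, seed=None):
    """Add zero-mean Gaussian noise whose strength varies per pixel.

    image:       2D list of intensities in [0, 1]
    noise_scale: 2D list of per-pixel standard deviations (hand-set here;
                 a learned obfuscator would produce this map itself)
    """
    rng = random.Random(seed)
    obfuscated = []
    for image_row, scale_row in zip(image, noise_scale):
        row = []
        for pixel, sigma in zip(image_row, scale_row):
            noisy = pixel + rng.gauss(0.0, sigma)
            row.append(min(1.0, max(0.0, noisy)))  # clamp to the valid range
        obfuscated.append(row)
    return obfuscated

# Toy 4x4 frame: the "person" occupies the two left columns,
# the "background" the two right columns.
image = [[0.5] * 4 for _ in range(4)]
noise_scale = [[0.0, 0.0, 0.5, 0.5] for _ in range(4)]
obfuscated = inject_noise(image, noise_scale, seed=0)
```

Because a fresh noise sample is drawn for every frame, an adversary cannot simply learn and invert one fixed transformation, which is what makes the stochastic variant harder to reverse than a deterministic filter.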

Putting It to the Test: Tools vs. Postures

We evaluated this new system on two critical fronts: Privacy (does it hide the IP-sensitive aspects?) and Utility (can we still measure ergonomics?). To test the privacy, we intentionally placed identifiable, branded tools (like a Makita drill and a Facom toolbox) in our dataset.

1. Contextual Privacy: Hiding the IP

We trained an AI object detector specifically to hunt for those branded tools. On the raw video, the detector found them easily. But when we ran it on our stochastically obfuscated images, detection plummeted to near zero.
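One common way to quantify "found them easily" versus "near zero" is detection recall at an intersection-over-union (IoU) threshold. The post does not state which metric was used, so the sketch below is an assumption, and the boxes are made-up toy values rather than results from the study.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0, ix2 - ix1) * max(0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def recall(ground_truth, detections, threshold=0.5):
    """Fraction of ground-truth boxes overlapped by at least one detection."""
    if not ground_truth:
        return 0.0
    hits = sum(1 for gt in ground_truth
               if any(iou(gt, det) >= threshold for det in detections))
    return hits / len(ground_truth)

# Hypothetical annotations for two tools in one frame.
tools = [(10, 10, 50, 50), (100, 40, 160, 90)]
raw_detections = [(12, 11, 49, 52), (98, 42, 158, 88)]
obfuscated_detections = []  # the detector fires on nothing

raw_recall = recall(tools, raw_detections)
obfuscated_recall = recall(tools, obfuscated_detections)
```

This simplified recall lets one detection match several ground-truth boxes; a full evaluation would typically enforce one-to-one matching and also report precision.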

Even when we created an “informed adversary”, an AI model specifically trained on the obfuscated images to try to see through the noise, our method successfully masked the tools. As you can see below, the human silhouette remains visible enough for a machine to track, but the contextual details are destroyed.

2. Utility: Ergonomic Accuracy

Protecting the background only matters if the primary task still works. In our experiments, adding strong background noise still preserved pose estimation performance well enough for ergonomic assessment. This suggests the method can retain utility while improving contextual privacy, even though privacy approaches typically trade protection against task accuracy.

As shown in the chart above, standard methods (like blurring, pixelation, or basic noise) often push results toward one of two extremes: either the image becomes too degraded for reliable tracking, or it stays easy enough to interpret that the context remains exposed. Our Stochastic Obfuscator trends toward a more balanced region, with stronger contextual privacy while retaining useful accuracy for analysis.

For the final 3D ergonomic risk scores (REBA), results from obfuscated footage remained close to those from raw video. Most assessments stayed within the same broader risk category, with an average score difference of 1.46 out of 10 (full REBA breakdown is in the paper).
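A comparison like this can be computed from paired scores. The sketch below assumes the "average score difference" is a mean absolute difference and uses the commonly cited REBA action-level bands; the paper may group categories differently, and the scores shown are made-up toy values, not data from the study.

```python
def risk_band(score):
    """Map a REBA score to a commonly cited action-level band."""
    if score <= 1:
        return "negligible"
    if score <= 3:
        return "low"
    if score <= 7:
        return "medium"
    if score <= 10:
        return "high"
    return "very high"

def compare_scores(raw_scores, obfuscated_scores):
    """Return (mean absolute difference, fraction in the same risk band)."""
    pairs = list(zip(raw_scores, obfuscated_scores))
    mean_abs_diff = sum(abs(r - o) for r, o in pairs) / len(pairs)
    same_band = sum(risk_band(r) == risk_band(o) for r, o in pairs) / len(pairs)
    return mean_abs_diff, same_band

# Hypothetical per-frame scores from raw and obfuscated footage.
raw_scores = [3, 5, 8, 6]
obfuscated_scores = [2, 6, 9, 4]
mean_abs_diff, same_band = compare_scores(raw_scores, obfuscated_scores)
```

Reporting both numbers is useful because a score can shift by a point or two without changing the recommended action, which is what matters for the ergonomic assessment.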

Bringing AI into industrial spaces doesn’t have to mean sacrificing worker privacy or company trade secrets. By shifting our focus to hiding the context of a scene as a whole, we can build computer vision tools that are safe by design.

Find the full version of our paper, with more detailed metrics and insights into the methodology, here: https://www.sciencedirect.com/science/article/pii/S1077314226000421.